Bad developers won’t be great with AI

Lower your expectations.

This is “Effective Delivery” — a bi-weekly newsletter from The Software House about improving software delivery through smarter IT team organization.

It was created by our senior technologists who’ve seen how strategic team management raises delivery performance by 20-40%.

TL;DR

With AI, top developers can produce features in 30 minutes,

That’s why IT Managers believe any engineer can reach similar performance,

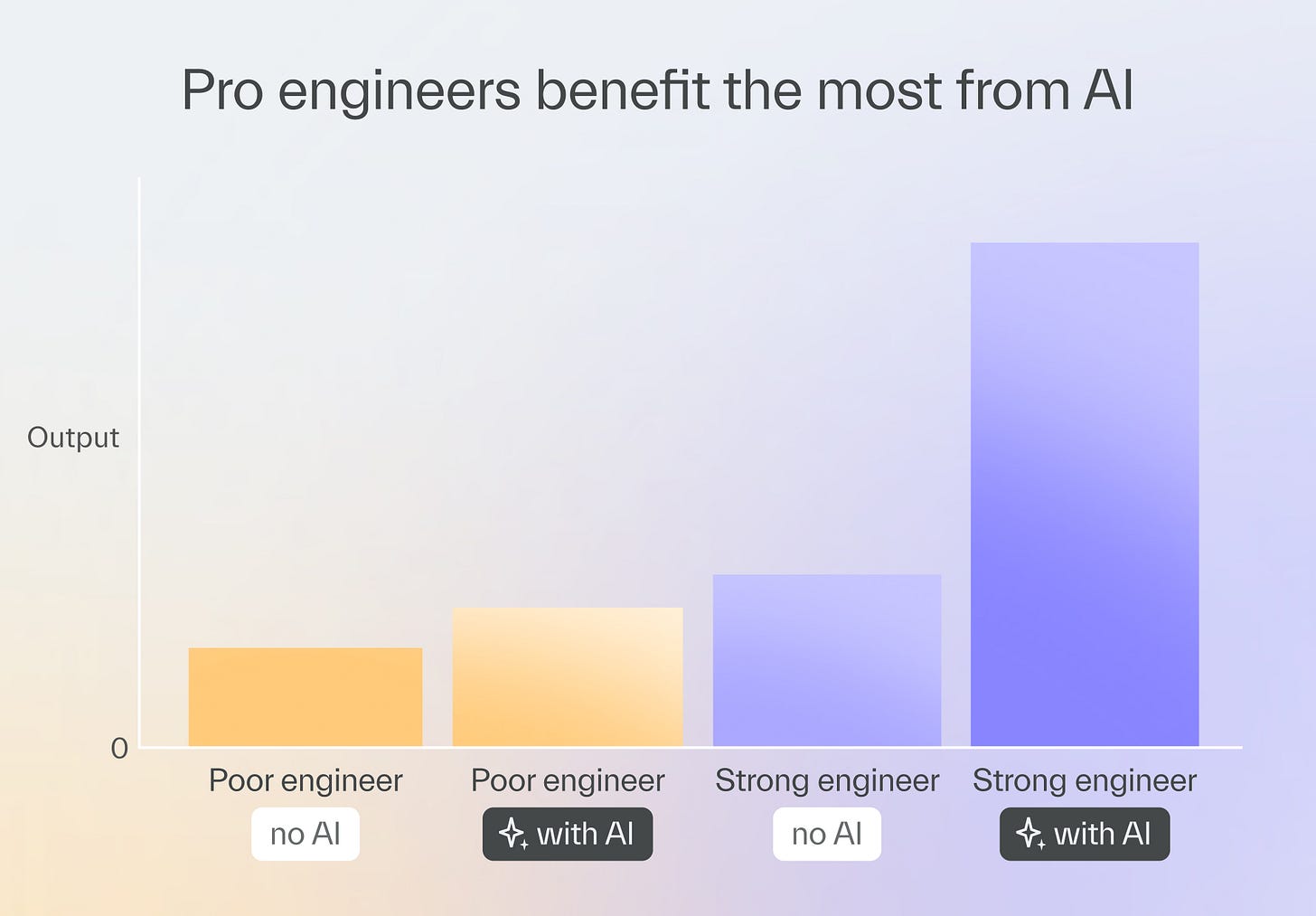

The truth is that AI tools empower great engineers the most,

Problem-solving, high-level overview, and research are key AI skills.

Contents

1. How senior devs use AI

2. Why bad developers can’t master AI

3. Empowering AI adoption

Hello!

I’m Marek, the COO and former CTO of The Software House.

My good friend Adam, our CTO, has recently shared a story here about our first 100% AI-driven project.

It shows how an AI framework like ours can make the development life cycle several times faster.

It’s a great read, but it may have left some IT managers with the impression that with AI, you can have a pro team with little to no engineering experience.

I have watched this and other AI-driven projects closely enough to know that’s not true.

How senior devs use AI

Over the past several months, we have been developing our own AI framework, copilot-collections.

The framework reflects our style in 3 ways:

it’s fed the same knowledge and standards that our developers follow,

the 4-stage workflow it uses is similar to what we do even without AI,

its 12 agents are often digital versions of our top engineers.

The quality is excellent too.

We’ve designed the framework to produce code as technically sound as what we would write manually.

In the last project, we set up SonarQube, the review tool we use, to reject any AI code that didn’t achieve a score of 4.7/5.

But just because our engineers can do this doesn’t mean everyone can.

Why bad developers can’t master AI

For all their usefulness, AI frameworks won’t produce great results without skilled, dedicated humans behind them, especially in the long term.

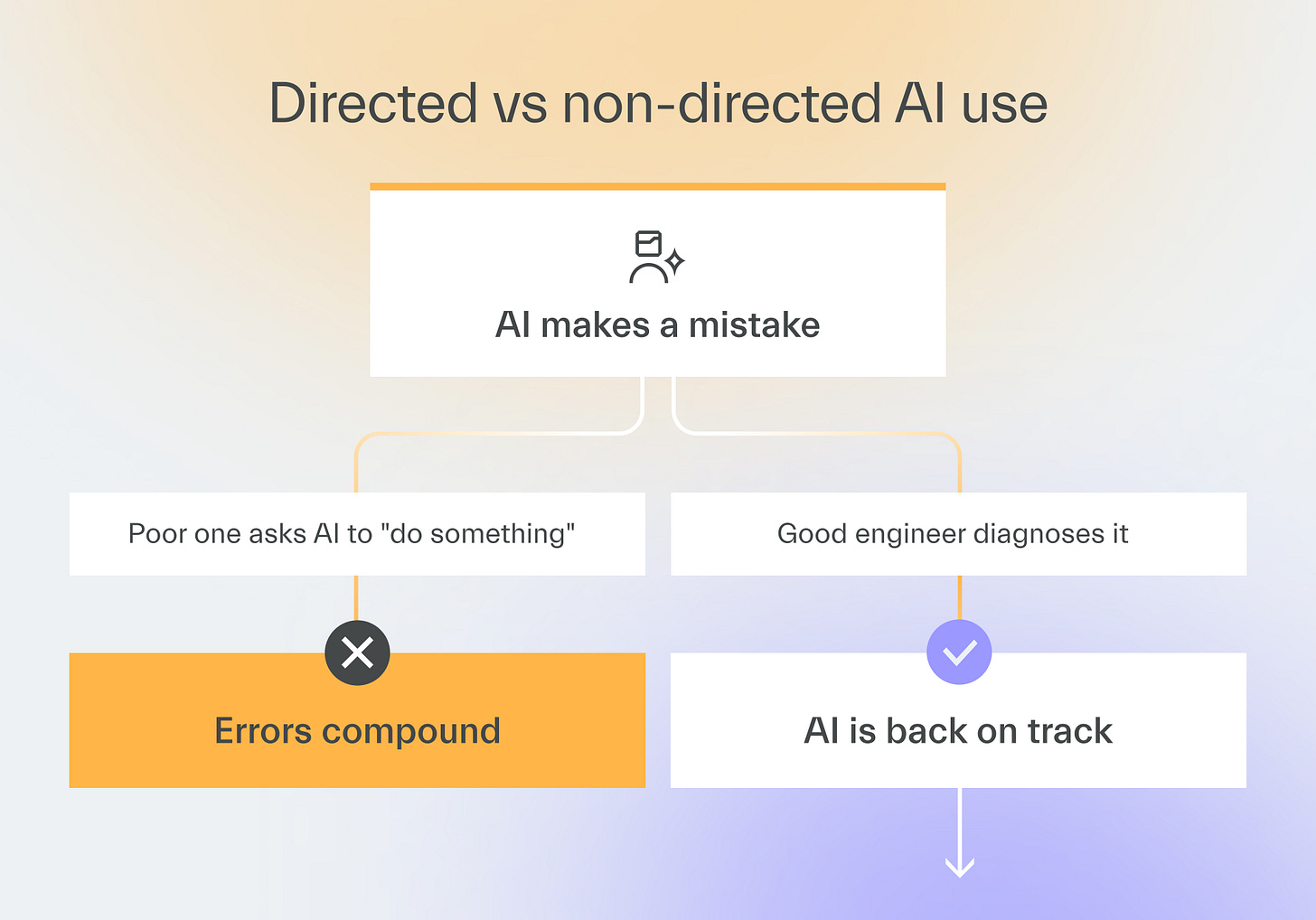

AI doesn’t know what your problem is

When your developer uses the 4-stage copilot-collections workflow to build a feature or an app, things may go well at first.

The smooth start lasts until they encounter a problem that requires more than a log or code analysis.

When the problem is not a bug, but a misalignment of intent or a complex architectural failure, AI struggles due to a lack of proper context.

But when a developer provides a detailed diagnosis, AI can implement the fix effectively.

One developer couldn’t understand why an AI-coded feature loaded slowly.

Another developer suspected the problem might be in ORM requests and prompted the AI to optimize them specifically.

The performance immediately improved.
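We haven’t described what exactly was wrong with those ORM requests, so take the sketch below as an illustration of one common culprit, not as a record of what happened in our project. It assumes the so-called N+1 pattern, where code loads a list and then runs one extra query per item. The tables, the `CountingDB` helper, and the function names are all hypothetical, and plain `sqlite3` stands in for a real ORM.

```python
import sqlite3


def build_db():
    """Create a small in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
        """
    )
    conn.executemany(
        "INSERT INTO authors VALUES (?, ?)",
        [(i, f"author-{i}") for i in range(1, 51)],
    )
    conn.executemany(
        "INSERT INTO posts VALUES (?, ?, ?)",
        [(i, i, f"post-{i}") for i in range(1, 51)],
    )
    return conn


class CountingDB:
    """Thin wrapper that counts how many queries the code sends."""

    def __init__(self, conn):
        self.conn = conn
        self.queries = 0

    def query(self, sql, params=()):
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def load_naive(db):
    """One query for the posts, then one more per post (the N+1 pattern)."""
    result = []
    posts = db.query("SELECT id, author_id, title FROM posts ORDER BY id")
    for _post_id, author_id, title in posts:
        (name,) = db.query("SELECT name FROM authors WHERE id = ?", (author_id,))[0]
        result.append((title, name))
    return result


def load_joined(db):
    """The same data fetched with a single JOIN."""
    return db.query(
        "SELECT p.title, a.name FROM posts p "
        "JOIN authors a ON a.id = p.author_id ORDER BY p.id"
    )


naive_db = CountingDB(build_db())
joined_db = CountingDB(build_db())
naive_rows = load_naive(naive_db)
joined_rows = load_joined(joined_db)

print(naive_db.queries, joined_db.queries)  # prints "51 1"
```

Both functions return identical rows, which is why this kind of problem is easy to miss in a log or a code review. An engineer who suspects it can point the AI straight at the data access layer instead of asking it to “make the feature faster”.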

AI needs just a little help… but very often

When I watched some of our top engineers work with copilot-collections, I even caught myself thinking the app was almost writing itself.

In fact, that’s what they were telling me as well!

But when I pressed them, I found that they were performing tons of microoperations at every step, which made the outcome possible.

The microoperations include:

Manually changing the generated tasks’ descriptions,

Troubleshooting micro issues,

Quick reviews of the AI output,

Providing the agents with proper access.

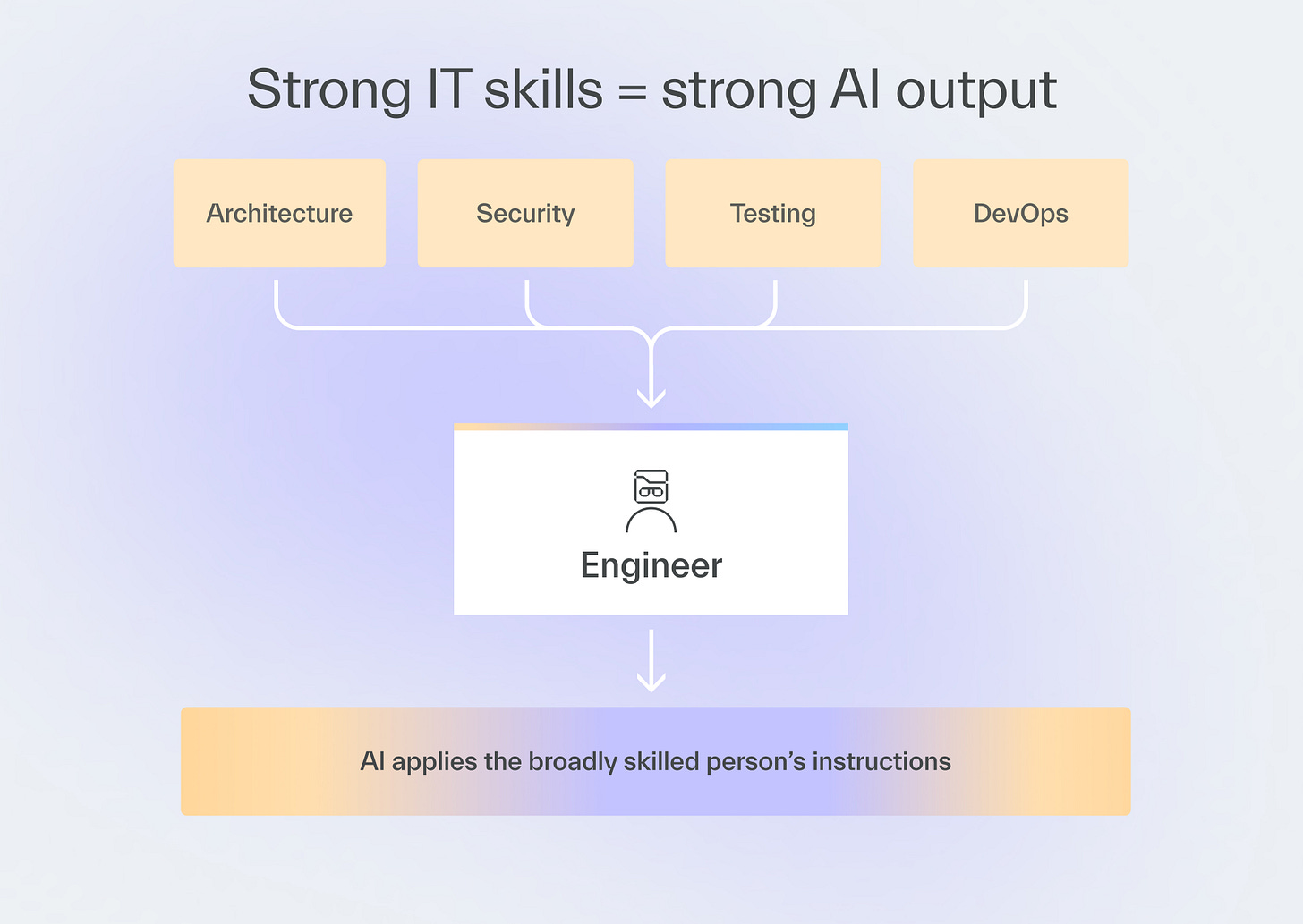

The diversity of these microoperations means that the best AI developers often have broad knowledge rather than deep specialist expertise in one field.

One of our top AI users is a DevOps expert and a manager who hasn’t coded in 10 years.

AI needs your constant attention

When dealing with AI, developers must stay on guard, not just during development but also outside of it.

The AI field is still nascent and evolving fast.

If your developers don’t have time to research and introduce innovations, your tried-and-tested AI approach may quickly become obsolete.

In the past, there were no standardized approaches to architecting LLM-based solutions.

We were reduced to simply writing prompts or creating our own systems, which took a lot of time.

When concepts such as Retrieval-Augmented Generation (RAG) and agentic systems emerged, we could develop complex LLM features more quickly.

Empowering AI adoption

With so many considerations, you may now think that mastering AI tools is too hard.

But your engineers can get there as long as you remember two rules.

Build a culture of tight verification

Your AI developers must think critically when prompting AI and verify its outputs at every step.

Have them pay special attention to the high-level outcomes that AI cannot verify on its own.

Give the champions more freedom

Developers who verify their work and achieve excellent productivity by using multiple agents deserve more freedom with AI tools.

It will allow them to reach new heights in productivity.

Our copilot-collections framework provides that freedom by making it easy to customize workflows and modify outputs by hand.

We did it to empower our engineers.

Developers with limited experience are better off with a stricter framework that doesn’t allow for deviations from the workflow, minimizing failure risk.

Next time

Adam will show you how some incredible AI champions achieve 300% or even 1000% (for real!) of the output of regular AI users.

Can you find a champion like this within your own team?

If you want to find out and you haven’t subscribed yet, be sure to do it now!