Don’t fear AI code reviews

Humans need AI to keep up with the tempo.

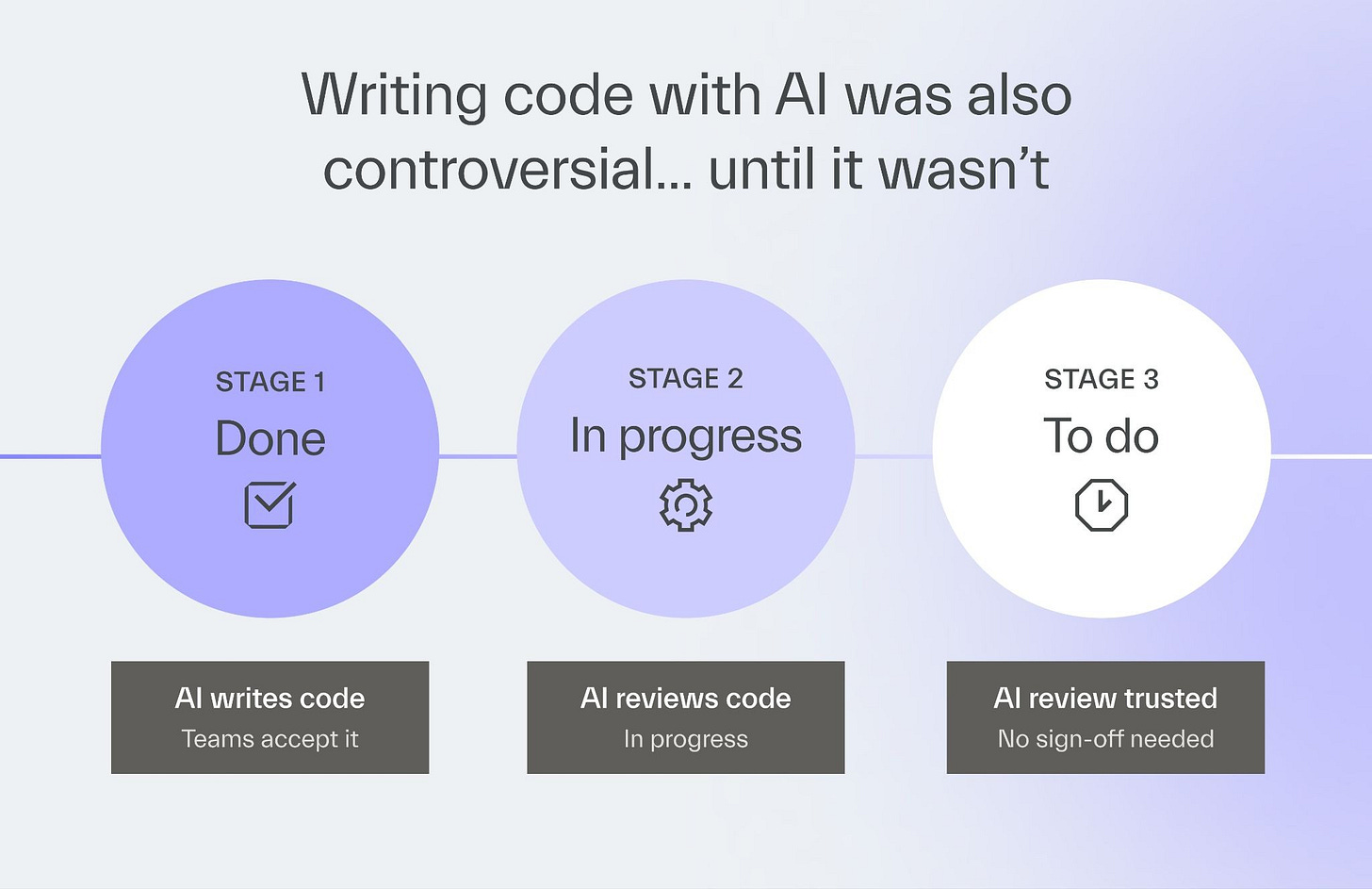

This is “Effective Delivery” — a newsletter from The Software House about improving software delivery through smarter IT team organization.

It was created by our senior technologists who’ve seen how strategic team management raises delivery performance by 20-40%.

TL;DR

AI generates tons of code, leading to review issues,

A human-AI hybrid approach to reviewing works well,

If AI misses a bug, refine the process instead of abandoning it,

100% AI reviews will become common in the future.

Hey! Adam here.

Code generation is now lightning-fast, and human reviewers can’t keep up.

And yet, many companies insist that humans are essential to the review process.

If that were true, AI-driven development would be inherently flawed.

After all, what’s the use of generating code fast if it gets stuck in review anyway?

The problem with AI code

Let’s put aside whether a human is a necessary element of a code review for a moment.

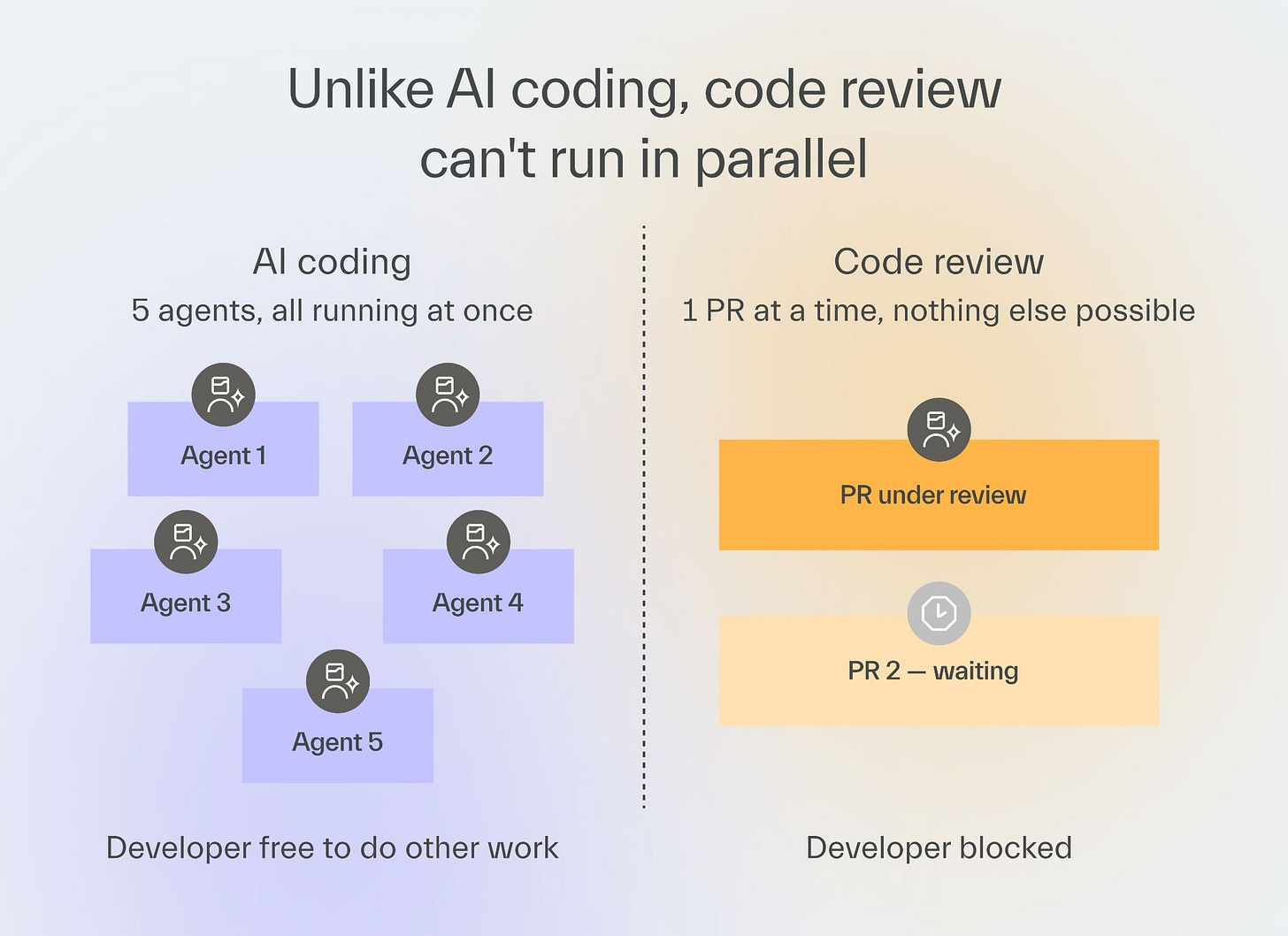

The problem with reviews in AI-driven projects is the sheer volume of code.

When a developer runs 5 agents in parallel, the queue of PRs grows fast.

While the code is being generated, the developer may step away to get a cup of coffee or play a game of paddleball.

But reviewing every single one of these PRs may require a human’s attention, depending on how the review process is set up.

AI shifts the ratio of code written to code reviewed, and the review side cannot keep pace.

The result is that either the review takes longer, or it gets less thorough.

AI and humans can review together

Let’s assume that we don’t want to eliminate a human from the code review process entirely.

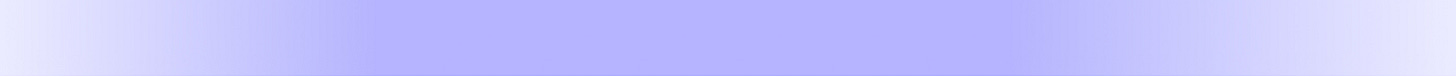

The most practical solution is to add a routing step to the CI/CD pipeline.

A simple version of it could work as follows:

After a developer opens a pull request, an AI agent reviews the change and provides a go/no-go signal.

A go means the developer merges without waiting for a human.

A no-go means a human reviewer steps in, sees what the AI flagged, and decides.

That way, developers skip the queue on low-risk changes, and human attention goes where it is needed.
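The routing step above can be sketched in a few lines of Python. This is a minimal illustration, not a real CI/CD integration: the `AiReview` type, the `route_pull_request` function, and the returned field names are all hypothetical. Note that the sketch sends anything other than an explicit go to a human, so an unexpected AI response never results in an unreviewed merge.

```python
from dataclasses import dataclass, field


@dataclass
class AiReview:
    """Hypothetical result of the AI review step."""
    verdict: str                                   # expected: "go" or "no-go"
    findings: list[str] = field(default_factory=list)


def route_pull_request(review: AiReview) -> dict:
    """Decide what happens to a pull request after the AI review."""
    if review.verdict == "go":
        # Low-risk change: the developer merges without waiting for a human.
        return {"action": "merge", "human_required": False, "context": []}
    # Any other verdict: a human steps in and sees what the AI flagged.
    return {
        "action": "request_human_review",
        "human_required": True,
        "context": review.findings,
    }
```

In a real pipeline, the `action` value would map to whatever your CI system uses to merge a change or assign a reviewer.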

AI reviews require clear rules

There are at least two things you need to make sure of for that process to work well.

1) You need to have clear standards for a review.

For example, a button color change may carry a different risk than a change to a payment flow.

For the button, an AI approval is fine.

For a payment flow, I want a human responsible for the outcome.

If your IT team reviews code the same way every time, you have a standard you can automate.

2) You need to write down all the rules.

If there are clear, repetitive patterns in your review process, and you turn them into rules that AI agents can access, the agents can, in principle, handle routing and reviewing.
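Written-down rules can be as simple as a list that maps parts of the codebase to the kind of review they need. The sketch below assumes a made-up project layout (`src/payments/`, `src/ui/`) and made-up policy names (`"human"`, `"ai"`); your own rules will look different. Anything that no rule covers falls back to a human reviewer.

```python
from fnmatch import fnmatchcase

# Hypothetical rules, checked top to bottom; the first matching pattern wins.
REVIEW_RULES = [
    ("src/payments/*", "human"),   # payment flow: a human owns the outcome
    ("src/ui/*", "ai"),            # e.g. a button color change
    ("*.css", "ai"),
]


def policy_for(path: str) -> str:
    """Return the review policy for a single changed file."""
    for pattern, policy in REVIEW_RULES:
        if fnmatchcase(path, pattern):
            return policy
    return "human"                 # no rule written down yet: play it safe


def required_reviewer(changed_files: list[str]) -> str:
    """A change needs a human if any file in it does, or if it is empty."""
    policies = {policy_for(path) for path in changed_files}
    if not policies or "human" in policies:
        return "human"
    return "ai"
```

With these rules, a change touching only `src/ui/button.css` is routed to the AI, while the same change combined with a file under `src/payments/` goes to a human.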

Our own AI framework, copilot-collections, is an example of that.

Copilot-collections offers a 4-step development process, with a separate AI agent responsible for each step.

One agent implements a feature and a second reviews it against acceptance criteria.

If something does not pass, the reviewing agent sends feedback to another agent, which fixes the issue automatically if possible.
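The review-and-fix cycle described here can be pictured as a small loop. The sketch below is a generic illustration and does not reflect how copilot-collections is actually implemented: `review`, `fix`, and `max_rounds` are hypothetical stand-ins for the reviewing agent, the fixing agent, and a cap on automatic retries.

```python
from typing import Callable


def review_loop(
    change: str,
    review: Callable[[str], list[str]],      # returns unmet acceptance criteria
    fix: Callable[[str, list[str]], str],    # returns a revised change
    max_rounds: int = 3,
) -> tuple[str, bool]:
    """Review a change, send failures back for fixing, and repeat.

    Returns the final change and whether it passed review.
    """
    for _ in range(max_rounds):
        failures = review(change)
        if not failures:
            return change, True
        change = fix(change, failures)
    # Out of automatic attempts: report the final state so a human can decide.
    return change, not review(change)
```

Capping the number of rounds matters: if the fixing agent cannot resolve an issue, the loop ends and the change can be handed to a person instead of cycling forever.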

The future of code reviews

We now know that a human and an AI can collaborate on code reviews.

But let’s now remove the assumption that a human is necessary in the review process at all.

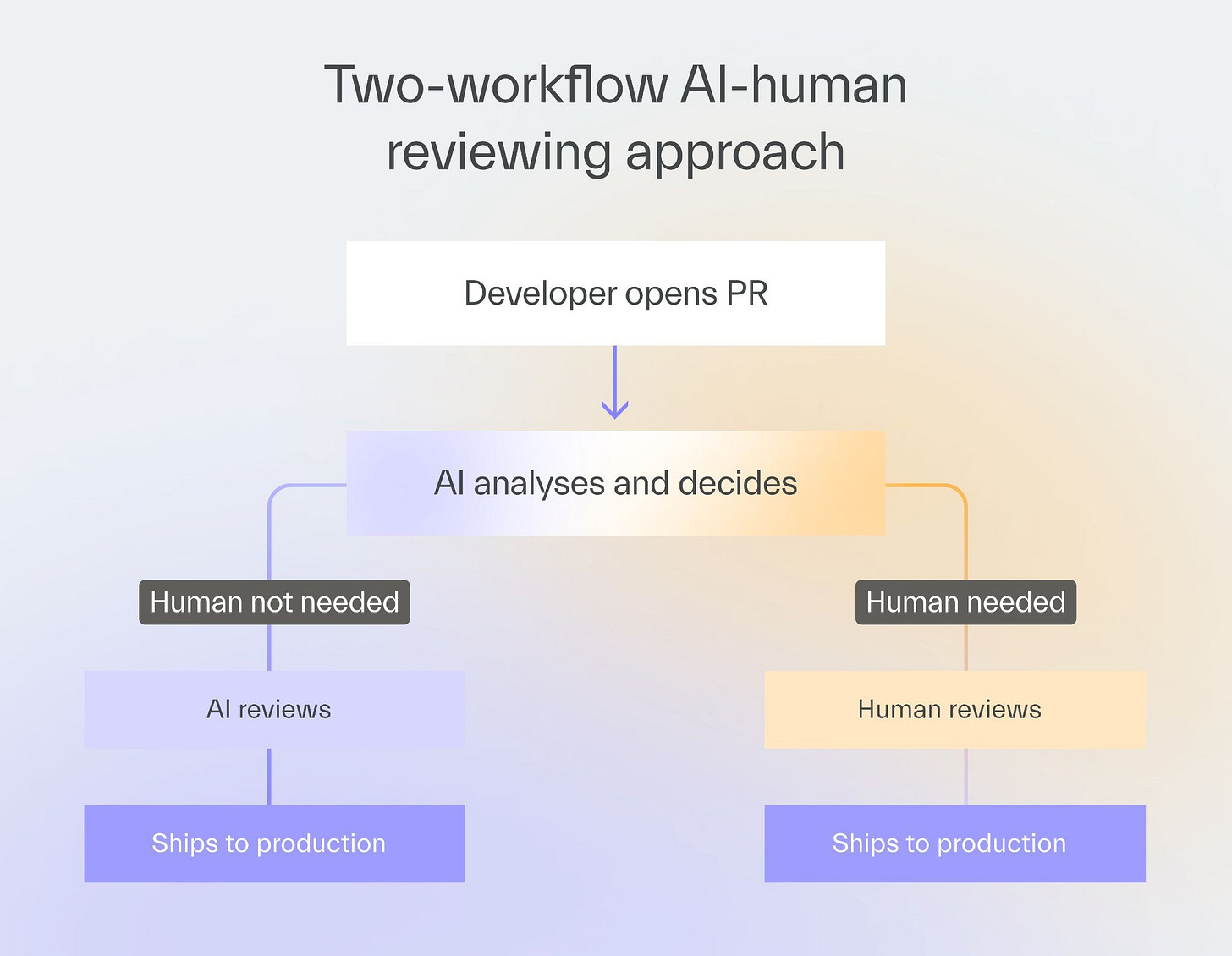

Many people hold the view that code has not truly been reviewed unless a human has looked at it.

I believe that it is a cultural, rather than a technical, issue and stems from AI’s novelty.

Just as an accident involving a self-driving car causes an uproar, we hold AI to a stricter standard than humans, even when AI makes fewer errors overall.

Security vulnerabilities exist in codebases today that passed human review without being flagged.

Assigning a human to code reviews does not guarantee quality.

Most IT teams have accepted that AI-written code can reach production.

Accepting that AI-reviewed code can also be well-reviewed code is the next step, and it will happen the same way it always does: gradually, as teams see it work.

When an AI review misses a problem, it is a reason to improve the process rather than abandon it.

Next time

Waiting for AI to generate code may be a good time for a developer to take a breather.

But if these “AI breaks” are frequent, your developers could consider doing something productive during that time instead.

My friend Andrzej will share his best ideas for developers to use during that time in the next episode.

Now, that is something worth waiting for!

Check out one of our most popular pieces!

Help us pay the bill for bitcoin mining